
12/22/2023 Xz compress


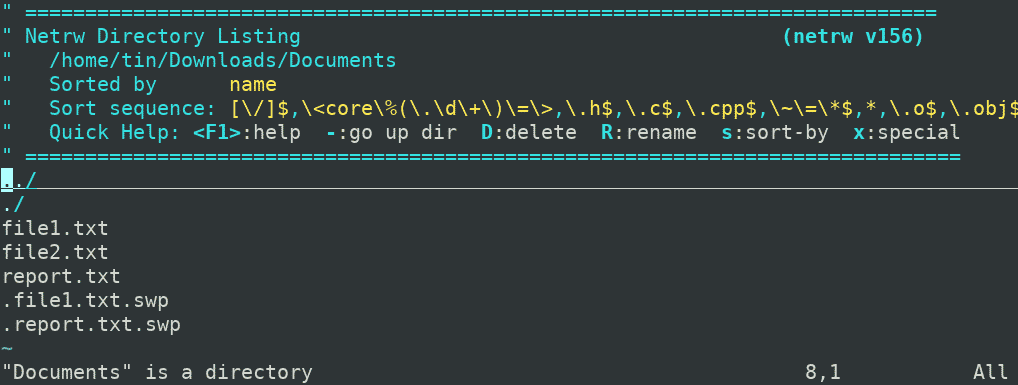

It's actually quite interesting that somebody spends time thinking about parallel compression. I've seen widely used CPU benchmarks where the compression tests typically yield worse results on server-class many-core hardware than on gaming CPUs with 2-4 turbo cores.

Currently it seems that CPUs are pretty much stuck at 1-3% annual performance gains for single-threaded programs. Most improvements come from higher (turbo) clock frequencies, not from improved IPC. Haswell and later generations provide somewhat better encryption performance thanks to new AVX instructions, but overall the situation is quite bad if you don't parallelize. I find it really unlikely that we could get even a 200% speedup with the current hardware development style during the next *100* years. As your graphs show, we could achieve 10000% NOW with better algorithms. Heck, we could even switch back to simpler, in-order cores to make room for a larger core count, and still achieve better performance than incremental gate-level CPU optimizations would deliver in 100 years.

Given that most people really do expect constant performance improvements in all technology and are willing to spend lots of money on new, faster hardware every year, I don't quite understand the criticism here. Better algorithms are clearly the lowest-hanging fruit now, with lots of potential. Compared to new hardware, software upgrades are 'free': you just install an update and instantly get better performance. Why waste money on bogus hardware upgrades?

If you combine file system encryption with transparent disk compression, even the 600 MB/s SATA disks might be fast enough to congest the CPU. LZ4/LZO are pretty much the only available algorithms that seem transparent enough not to slow everything down. For instance, squashfs/xz clearly slows down disk performance on most machines. The only place where it improves performance is PXE/NFS boot over 100 Mbps ethernet.
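To make the parallelization point concrete, here is a rough sketch of chunked parallel compression in Python. It is not how xz itself is implemented: the function name `compress_parallel` and its parameters are made up for illustration, and it relies on two assumptions, namely that CPython's `lzma` module releases the GIL while compressing (so threads can run on several cores) and that independent .xz streams may simply be concatenated.

```python
import lzma
from concurrent.futures import ThreadPoolExecutor


def compress_parallel(data: bytes, chunk_size: int = 1 << 20,
                      workers: int = 4, preset: int = 6) -> bytes:
    """Compress `data` as a sequence of independent .xz streams, one per chunk.

    Hypothetical helper for illustration only.
    """
    chunks = [data[i:i + chunk_size] for i in range(0, len(data), chunk_size)]
    if not chunks:
        # Empty input still needs one valid (empty) stream.
        return lzma.compress(b"", preset=preset)
    with ThreadPoolExecutor(max_workers=workers) as pool:
        # map() preserves input order, so the streams line up with the chunks.
        streams = pool.map(lambda c: lzma.compress(c, preset=preset), chunks)
        return b"".join(streams)


if __name__ == "__main__":
    payload = b"INSERT INTO t VALUES (1, 'example');\n" * 20_000
    packed = compress_parallel(payload, chunk_size=1 << 18, workers=2)
    # lzma.decompress() reads concatenated streams back as one byte string.
    assert lzma.decompress(packed) == payload
    print(len(payload), "->", len(packed), "bytes")
```

The trade-off is the usual one: each chunk is compressed without knowledge of the others, so smaller chunks give more parallelism but a somewhat worse ratio.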
Some notes about disk performance: around 5-10 years ago most desktop users bought budget 5400-7200 rpm disks. They had specs like: … Disk bandwidth has since improved by over 3000% and IOPS by over 300000%. We should definitely expect more rapid improvements in the near future (e.g. …). So it's pretty obvious that things have become CPU/memory bound again. Many users also encrypt their file system, which affects disk performance. For instance, my old i7 3770k system (2012) can only encrypt/decrypt up to 2000/2000 MB/s (multi-core parallel AES XTS 256b).

XZ is one of the best compression tools I've seen; it has compressed really big files down to a fraction of their size. I had a 16 GB SQL dump, and it managed to compress it down to 263 MB.

I needed to compress several files using XZ. As XZ only compresses single files, we have to use TAR to bundle them first. And since the newer version of XZ supports multi-threading, we can speed up compression quite a bit.

Since the XZ Utils in Debian/Ubuntu is an old version, I've created my own backports that I can use.

`echo "deb debian main" | sudo tee /etc/apt//packages-matoski-com.list`

This should give you a newer version of XZ that supports multi-threading.

Let's say we have a directory with a lot of files and subdirectories. To compress it all we would do:

`tar -cf - directory/ | xz -9e --threads=8 -c - > …`

On newer versions of tar you can also do the following:

`XZ_OPT="-9e --threads=8" tar cJf … directory`

Or, if you want, you can do it separately with a progress bar like this:

`tar -cf - directory/ | (pv -s $(du -sb directory/ | awk '{print $1}') -p --timer --rate --bytes > tarfile.…`

When extracting, tar auto-detects the compression type, so one extraction command also works for tar archives compressed with other algorithms.
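For anyone who would rather script this than type the tar/xz pipeline, here is a minimal sketch using only Python's standard library. The function name `make_tar_xz` and its arguments are hypothetical. `preset=9 | lzma.PRESET_EXTREME` is meant to correspond to `-9e`. As far as I know the standard `lzma` module does not expose xz's multi-threaded encoder, so there is no equivalent of `--threads=8` here.

```python
import lzma
import tarfile
from pathlib import Path


def make_tar_xz(src_dir: str, archive_path: str,
                preset: int = 9 | lzma.PRESET_EXTREME) -> float:
    """Pack `src_dir` into an xz-compressed tar; return compressed/original size.

    Hypothetical helper. Preset 9 needs several hundred MiB of RAM to compress.
    """
    src = Path(src_dir)
    # "w:xz" makes tarfile write through an LZMA compressor, like `tar cJf`.
    with tarfile.open(archive_path, "w:xz", preset=preset) as tar:
        tar.add(str(src), arcname=src.name)
    original = sum(p.stat().st_size for p in src.rglob("*") if p.is_file())
    compressed = Path(archive_path).stat().st_size
    return compressed / original if original else 0.0
```

For scale, the SQL dump mentioned above went from 16 GB to 263 MB, which is a ratio of roughly 1.6%.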
To extract a tar.xz file, invoke the tar command with the `--extract` (`-x`) option and specify the archive file name after the `-f` option: `tar -xf …`
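The same idea can be sketched in Python with the standard `tarfile` module, which also detects the compression on its own. The helper name `extract_archive` is made up for this example.

```python
import tarfile


def extract_archive(archive_path: str, dest_dir: str) -> list:
    """Extract a tar archive, letting tarfile detect the compression itself.

    Hypothetical helper; only use it on archives you trust.
    """
    # "r:*" probes for gzip, bzip2 and xz compression transparently.
    with tarfile.open(archive_path, "r:*") as tar:
        names = tar.getnames()
        # Newer Python versions also accept a `filter` argument here
        # to restrict what an untrusted archive may write.
        tar.extractall(dest_dir)
    return names
```

Because of the `r:*` mode, the same call should work whether the archive is a plain .tar, a .tar.gz, a .tar.bz2 or a .tar.xz.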
